The Concept

DermaTrack is a comprehensive skin health monitoring system that combines a handheld imaging device, a mobile app, and an AI-driven platform. It captures high-resolution skin images, analyses them with AI against an extensive database of skin conditions, and provides users with easy-to-read reports, including a skin avatar and recommendations for further actions, such as treatments or dermatologist consultations.

Designed for Mass Manufacture

DermaTrack has been designed to be affordable, focusing on those manufacturing techniques that adequately balance excellent quality and precision engineering with price point and accessibility.

+ Robust Rubber Ergonomics

+ Purpose Built PCB and Camera System

+ High Quality Macro Optics Capable of Crystal Clear Imaging

+ Cast Aluminium Frame

Using an App Built for Everyone

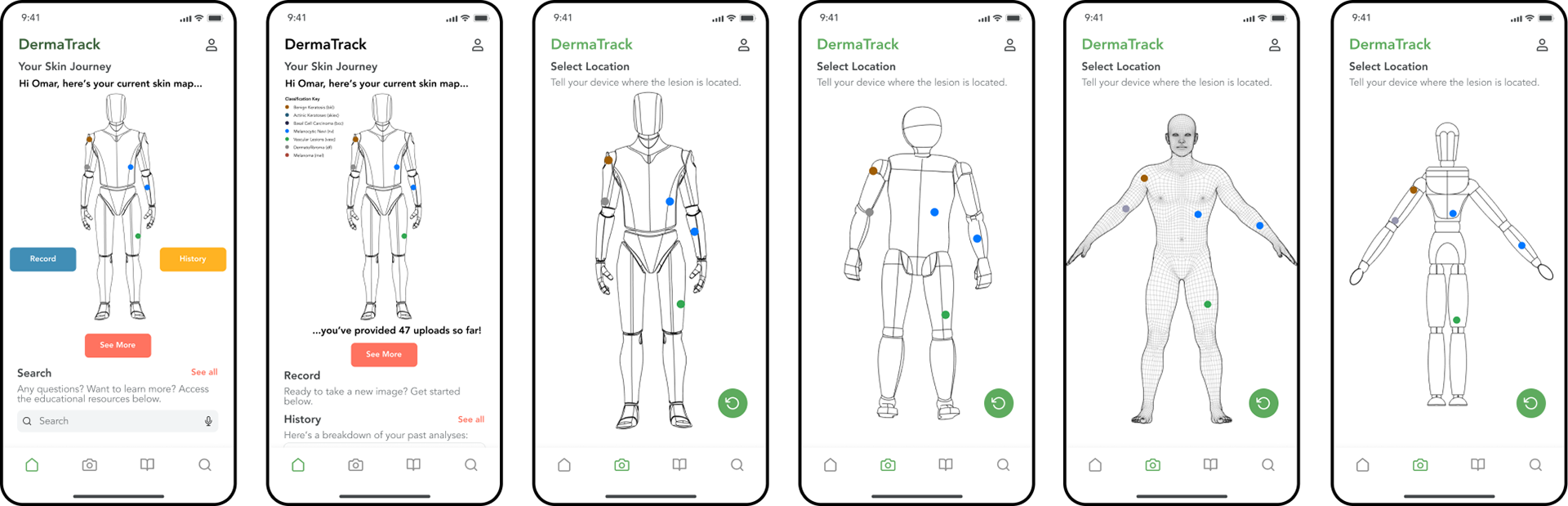

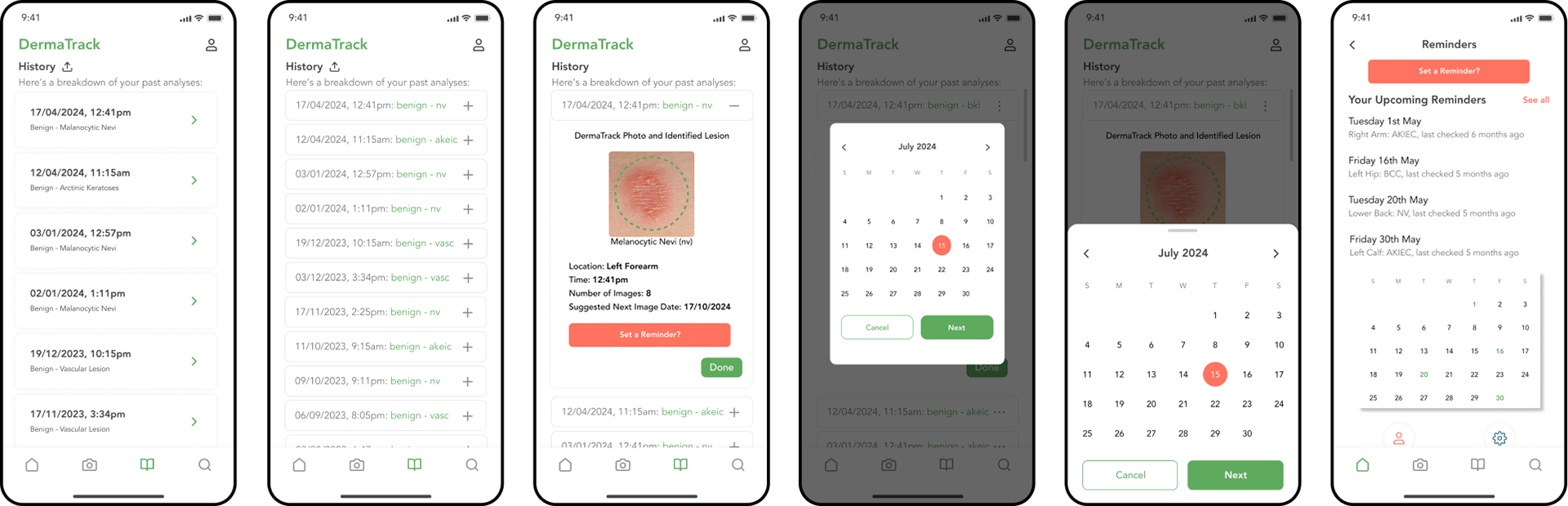

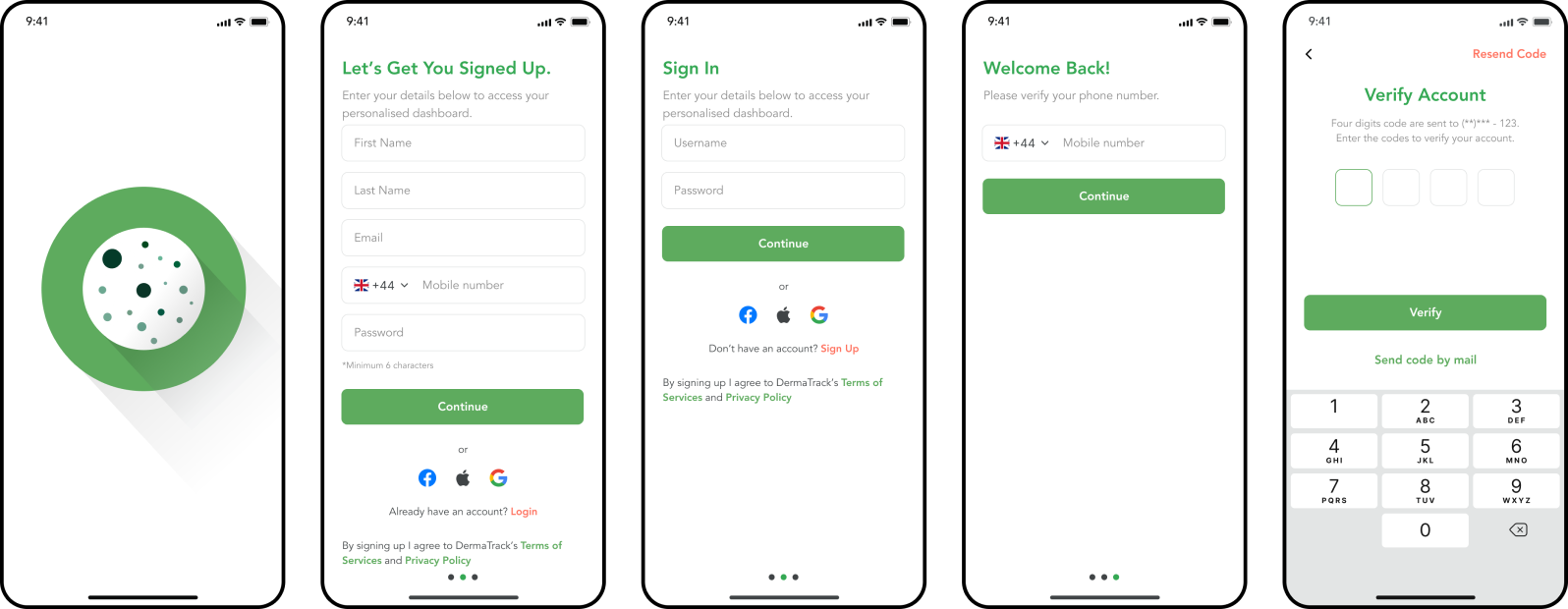

User experience is central to DermaTrack, and feedback from dermatologists and users shaped its intuitive design. The handheld device is easy to use, and the mobile app guides users through capturing images and provides instant, precise results and recommendations. DermaTrack also offers educational resources and enables remote consultations, allowing users to share pictures and AI results with dermatologists at the click of a button. This enhances access to professional care.

+ Educational Resources

+ Tracking and Digital Avatar Capabilities

+ Emergency Medical Support

+ Intuitive User Interaction

Intelligent Algorithms

DermaTrack operates on two foundational algorithms built on machine learning and artificial intelligence. These algorithms diagnose and categorise all skin lesion types, reporting a cancer prognosis and its probability so that users are fully informed.

+ More Accurate than Medical Professionals

+ Power Estimation

+ Emergency Medical Support

Intuitive Interactions

Rigorous testing has been conducted to ensure all components of the DermaTrack system are stylish, convenient, and intuitive for those unfamiliar with the medical domain.

+ Automated Distance, Angle, and Orientation Indicators

+ Self-Focusing Camera System

Purpose-Built Add-Ons

Reaching areas such as the back, neck and scalp is inherently difficult. The DermaTrack team therefore developed two handle mechanisms designed specifically for these hard-to-reach areas. Each handle slots into the friction-fit socket at the bottom of the device, allowing it to be held with the fingertips or attached to a standard gimbal mechanism.

What's in the Box?

1 x DermaTrack Device

1 x Electromagnetic Charging Dock

2 x Swappable Handles

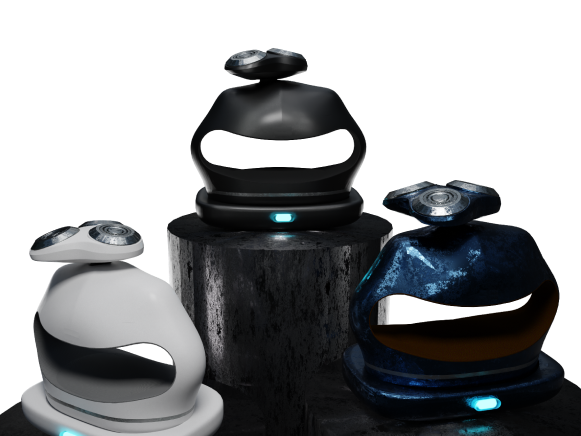

Colourways

Imperial Grey - Shamrock Green - Onyx Black - Buff Beige

Understanding the Current Market

DermaTrack is built around solving a daily or weekly problem that the ordinary person can relate to. Understanding the typical journey a user takes when dealing with their skin was paramount to providing a valuable intervention. This research identified severe NHS wait times, insecurity around self-evaluation, and a lack of preventative treatment options for those at risk.

Establishing the Project Scope

Although DermaTrack aims to be a comprehensive solution, the project acknowledged the time and cost constraints of a 6-month thesis. Biometric and other data sources were therefore excluded from the project scope, providing a basis for future work as DermaTrack continues to develop into 2025 and beyond.

Creating a Product Vision

Using the system visualisation as a basis for development, a use case was created. Use cases are an established strategy for modelling the requirements of a system, providing a basis for further design analysis via aspect-oriented partitioning.

Ideating on a user journey established a clear intention for the design of individual components, and a loose vision for the final product emerged.

The Device

The physical device is instrumental in providing the DermaTrack system with high-quality data, serving as a foundational component of the system's data acquisition process. The result is a novel skin-tracking input device with innovative image consistency, quality, and user experience features. It is also the first device of its kind to focus on ergonomics when photographing hard-to-reach areas of the body.

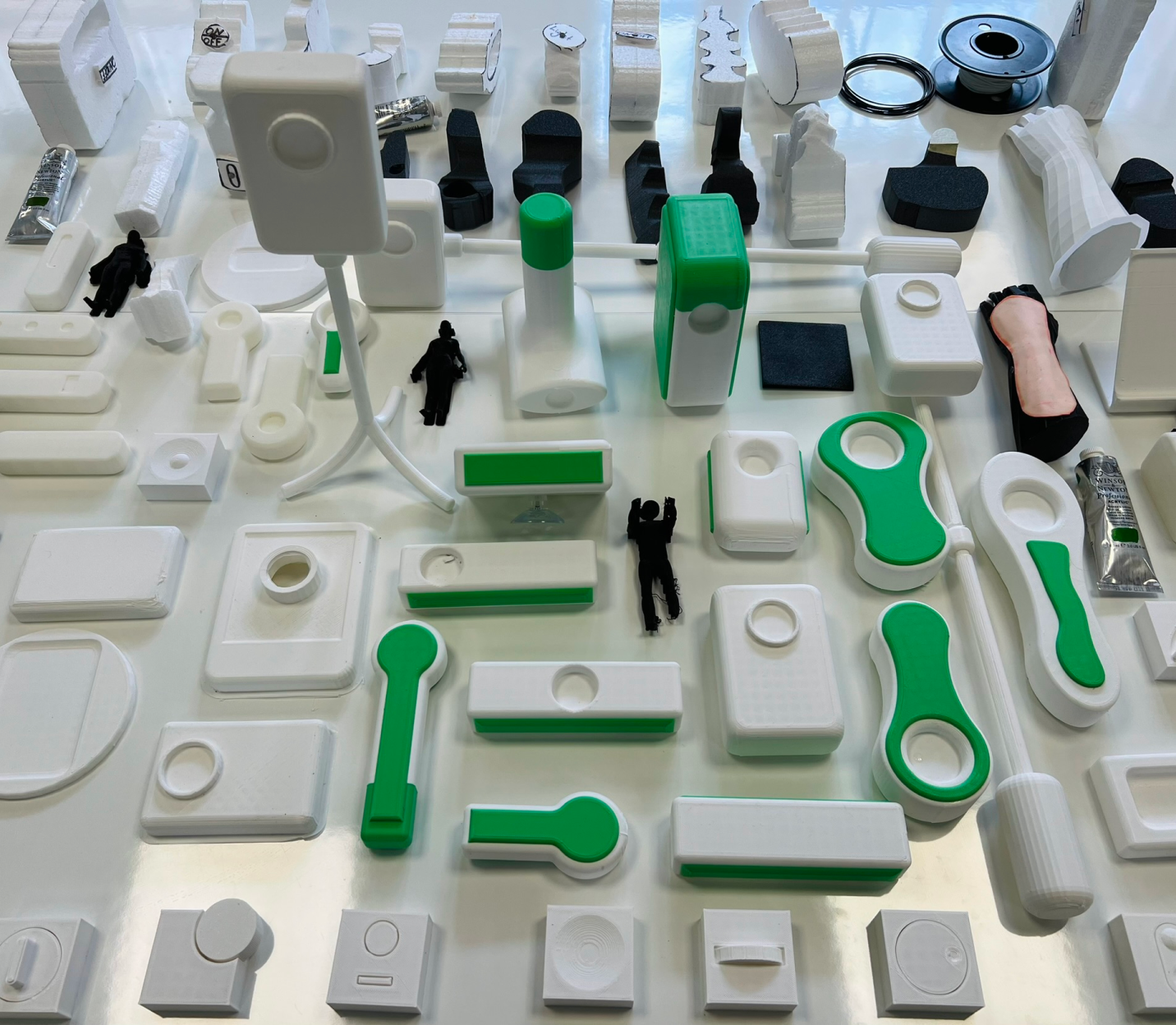

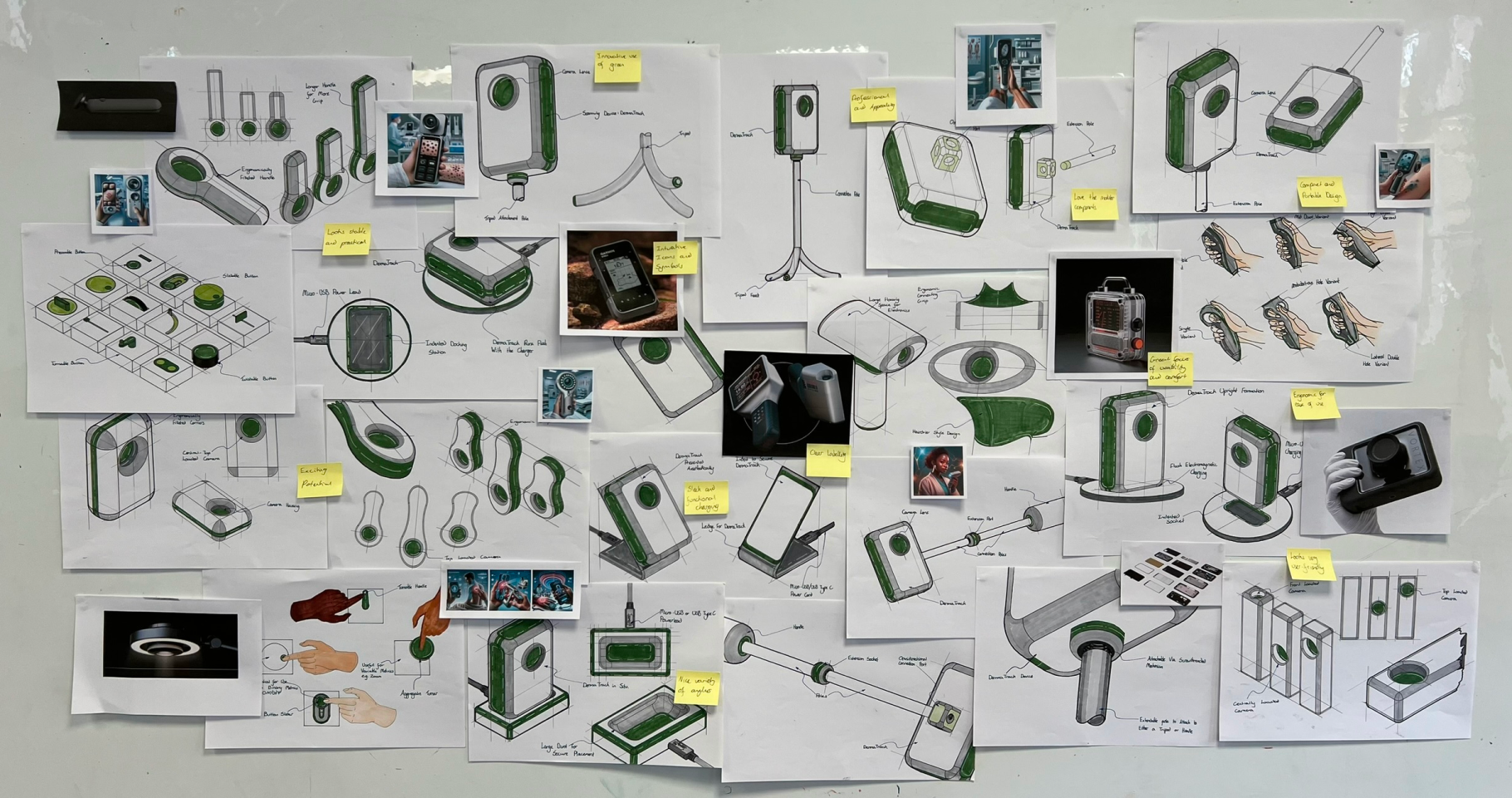

Rapid Prototyping

Annotated sketches and mood boards facilitated ideation on adjustability, user interaction, shape, size, and ergonomics to enhance intuition and comfort. Sketches serve as a valuable ideation strategy for rapid, iterative development in user-centred design, while mood boards provide context and inspiration. Generative AI was also trialled for producing mood board images, in keeping with the aim of using state-of-the-art techniques and technologies throughout the development process.

Physical prototyping was employed as a mixed-media process that provided further insight into anthropometrics, ergonomics, and proportions. Physical prototyping bridges the sensory gap left by 2D processes and has been shown to yield more reliable feedback and more successful outcomes.

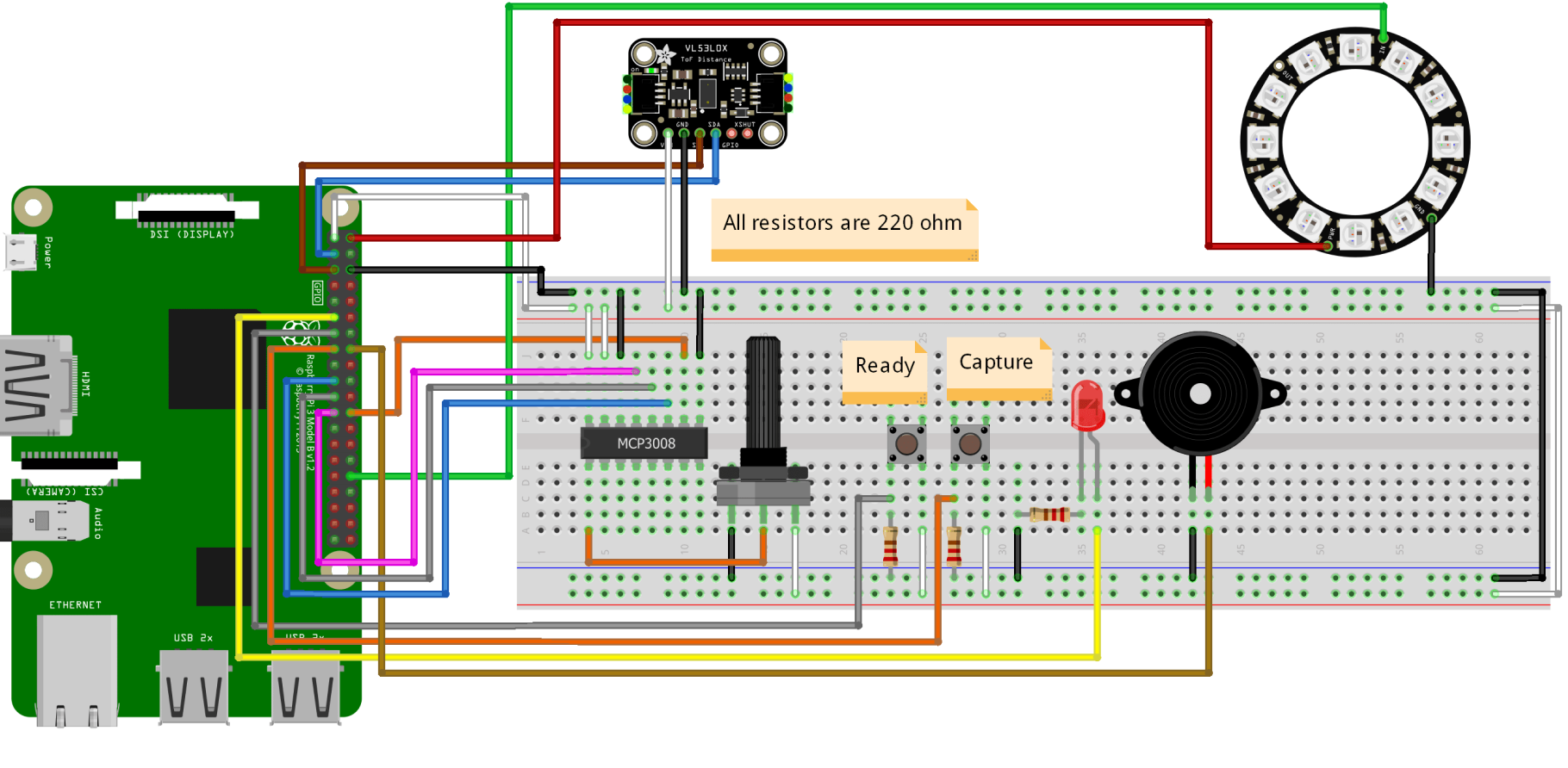

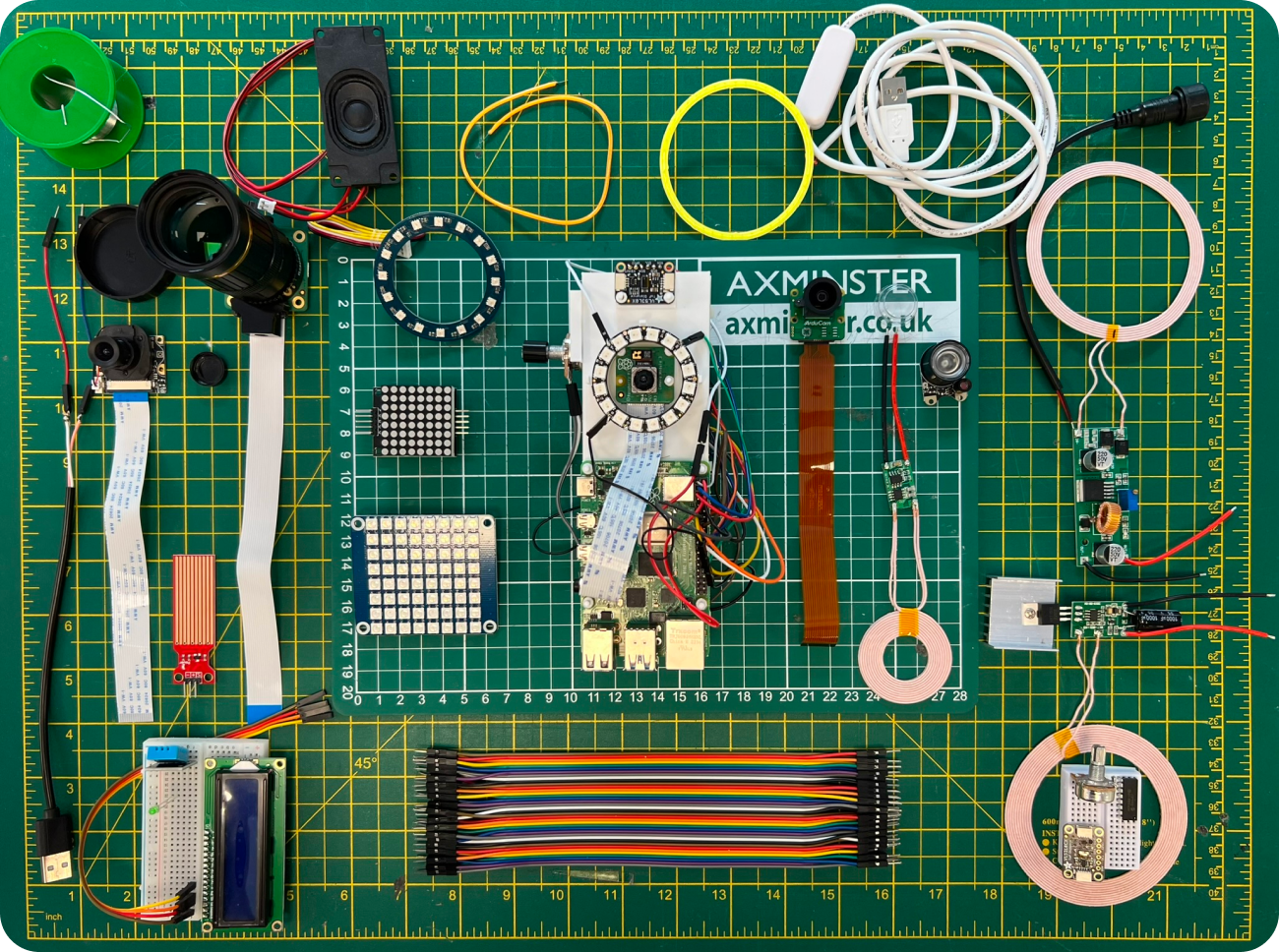

Building a Working Prototype

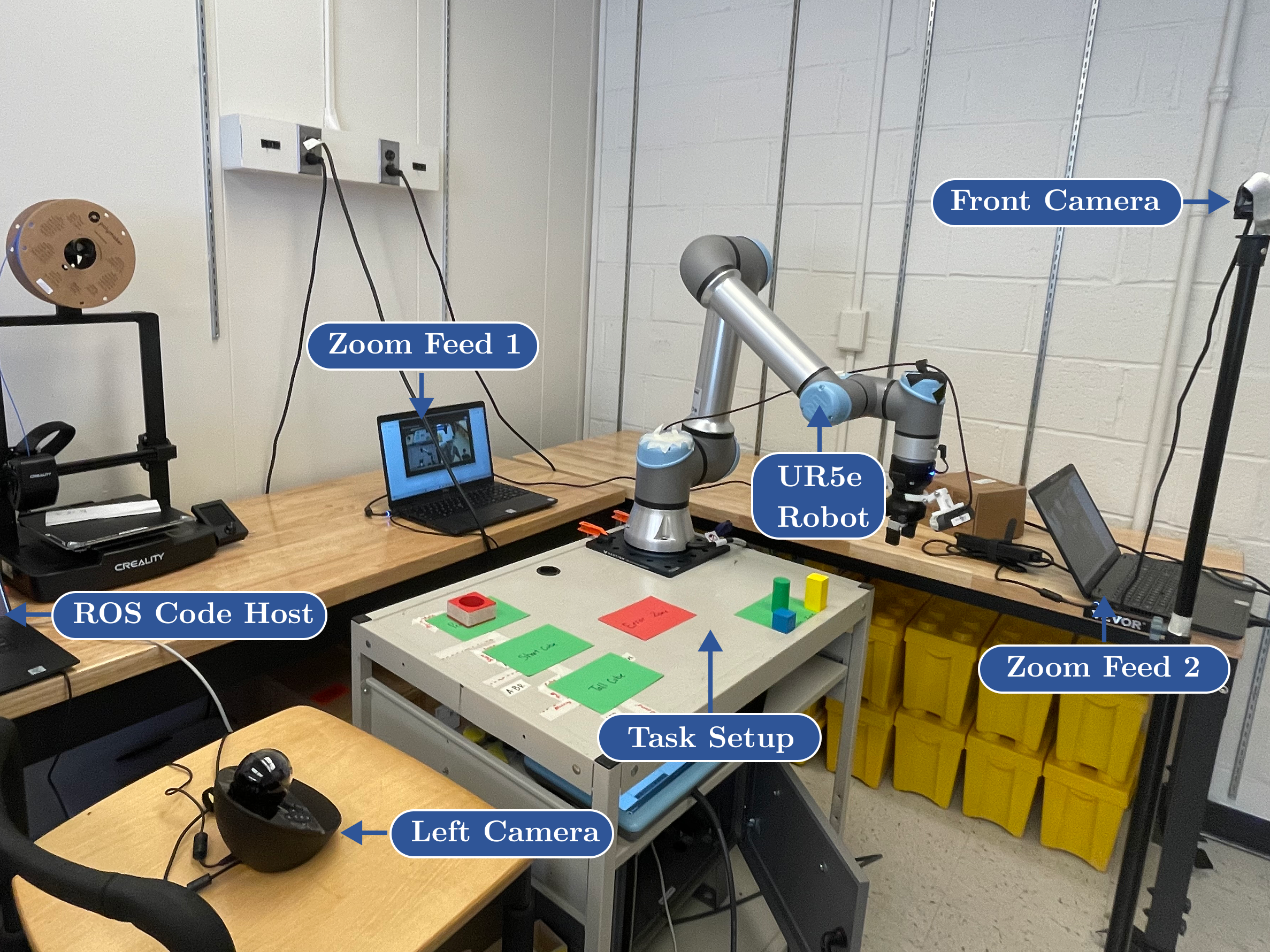

An electronics schematic was constructed for the Raspberry Pi circuit. Cameras were attached to the Raspberry Pi’s CSI camera port via a MIPI CSI flat ribbon cable. The circuit was run via a Python script that enabled variation and testing of cameras, distances, and lighting setups. A sample of electronics was trialled using this method, with power inputs, camera types, and lighting outputs varied across multiple trials to identify the most suitable settings for replicating the final design’s functionality.
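A test script of this kind might enumerate every combination of camera, distance, and lighting setting before running each trial. The sketch below is illustrative only: the `Trial` class, the camera labels, the distances, and the lighting levels are hypothetical placeholders rather than the values used in the project, and the hardware capture call is left out so the code runs without a Raspberry Pi.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Trial:
    camera: str         # hypothetical camera module label
    distance_mm: int    # lens-to-skin working distance
    led_duty: float     # lighting level as a PWM duty cycle (0.0 to 1.0)

    def filename(self) -> str:
        # Encode the settings in the file name so images can be compared later.
        return f"{self.camera}_d{self.distance_mm}mm_led{round(self.led_duty * 100):03d}.jpg"


def build_trials(cameras, distances_mm, led_duties):
    """Return one Trial per combination of the supplied settings."""
    return [Trial(c, d, l) for c, d, l in product(cameras, distances_mm, led_duties)]


# Placeholder values, assumed for illustration only.
trials = build_trials(
    cameras=["camera_a", "camera_b"],
    distances_mm=[20, 40, 60],
    led_duties=[0.25, 0.5, 1.0],
)

for trial in trials:
    # On the real rig, the capture call for the attached camera would go here.
    print(trial.filename())
```

Encoding the settings in each file name keeps every captured image traceable to the exact conditions it was taken under, which makes side-by-side comparison across trials straightforward.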

Building a Working Prototype

Before finalising prototypes, a full CAD model was constructed. Design for Manufacturing and Assembly (DFMA) principles were incorporated into the finished surfaces through an iterative process that included simplifying, optimising, and refining the industrial design. ABS, aluminium, and rubber composite components were customised for injection moulding, 5-axis CNC machining, or sheet metal folding. Standard parts were utilised whenever feasible, and internal components were modelled to ensure proper tolerancing and assembly sequencing.

Final Prototypes

Form and function dictated the creation of two prototypes: a look-alike and a works-like prototype.

Look-Alike Prototyping

This secondary prototype was a set of look-alike models intended to show users what the device would look and feel like in physical form. A physical embodiment of the final CAD model, it serves as a pragmatic way of testing size, comfort, usability, and similar physical validation criteria.

The App

The UI/UX served as the primary user experience touchpoint in the DermaTrack system’s digital domain, providing access to visualised skin data, history, and education, amongst other fundamental features outlined in the relevant stakeholder specifications.

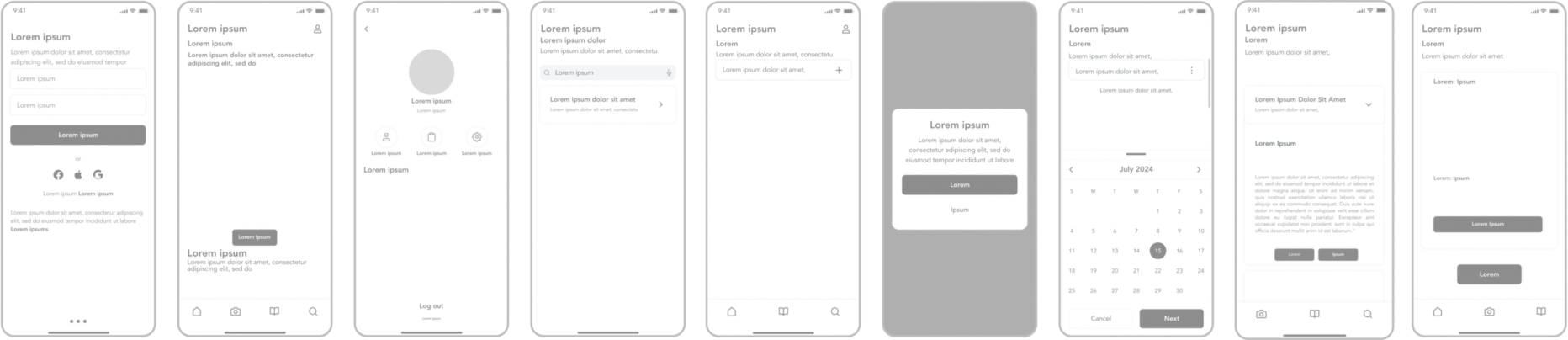

Figma Prototyping

Figma was used to develop the interface design and interactive prototype.

The Atomic Design Process

The design development process used the atomic design methodology, breaking the UI into small, reusable components. This approach created a structured design system that facilitates easy and systematic modifications. The key features and modifications made in each iteration are outlined below.

Iterations Breed Improvements...

The initial UI design was expected to require optimisation, so an iterative user testing approach was integral to the design development. Five iterations were conducted, each with new participant groups. Workshops included user testing, benchmarking against existing solutions, and evaluation based on predefined criteria. A total of 20 participants were recruited across all iterations.

The Algorithm

A literature review highlighted AI's benefits, noting that shortcomings often arise from external factors like datasets, inputs, and user interaction. Developing a machine learning algorithm offered insights into its role in the final product and specific use cases, enhancing understanding of the project's domain. While not a novel contribution to skin-tracking accuracy, the case-specific machine-learning pipelines demonstrated the functional viability of the DermaTrack ecosystem.

Development Method

Two machine learning pipelines were created for DermaTrack using Python's extensive machine learning and data science libraries. Both pipelines utilised PyTorch's CNN API to build and train models with pre-trained CNNs, enabling transfer learning for faster convergence and improved performance, especially with smaller datasets.

Jupyter notebooks were hosted on Kaggle to leverage extensive datasets, free GPU resources, and a user-friendly environment. The methodological approach is outlined in the flowchart below.

The Datasets

Pipeline 1 focused on implementing and validating a skin classifier that can distinguish between benign and malignant skin lesions.

The dataset selected is a collection of 13,900 images of skin lesions taken during dermatological examination, chosen for its size, diversity, accessibility and relevance since the metadata for each image file included labels of benign or malignant classification.

Pipeline 2 aimed to extend Pipeline 1 by implementing a multi-class model capable of distinguishing between all seven lesion types described in the DermaTrack app. The dataset used for this model was the HAM10000 skin lesion set, an open dataset available through the Harvard Dataverse.

System Validation

The final outputs were evaluated using a mixed-methods approach. The evaluation aimed to assess how well the outputs conformed to the original design specifications and objectives. Of the 32 participants involved in development, 17 were re-recruited to evaluate an area they had not previously seen, and 9 additional participants were recruited via surveys.

Product Testing

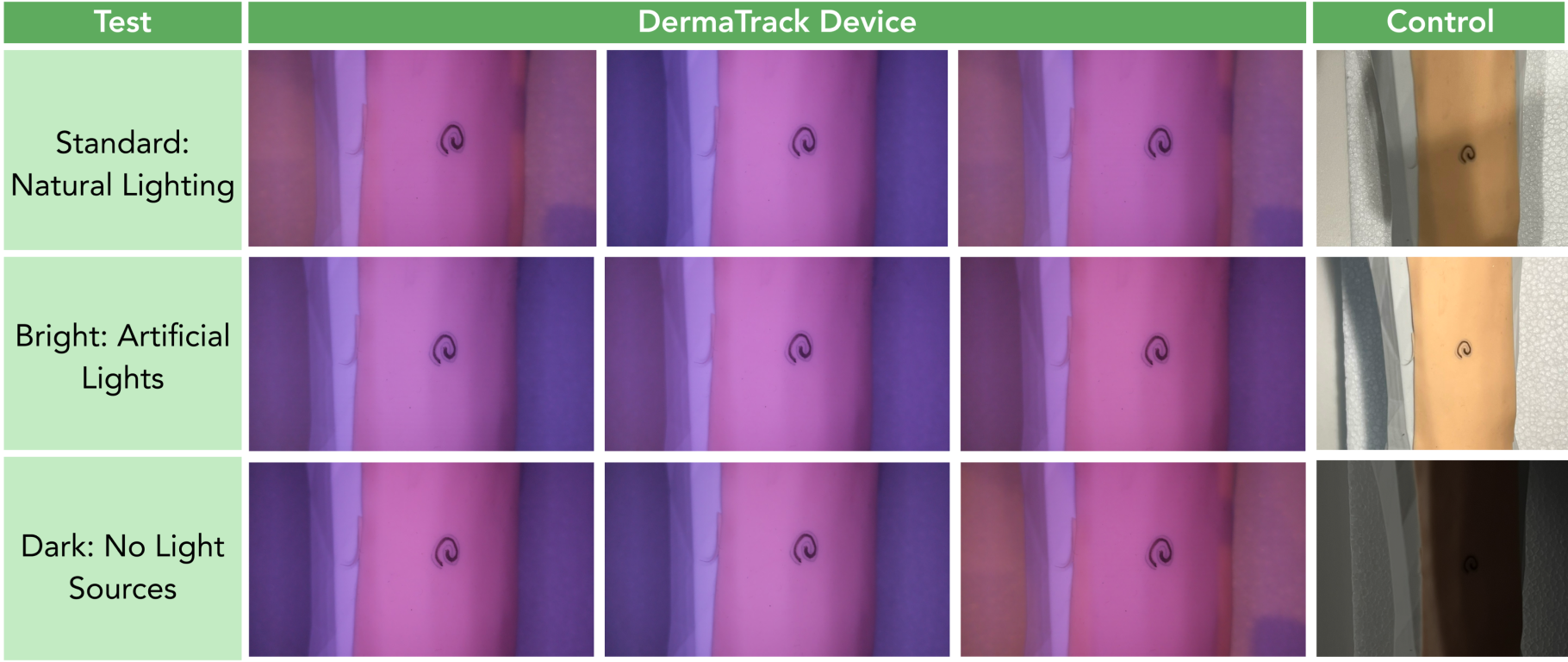

Experiment No.1 evaluated the impact of consistent lighting on image quality using the prototype.

Experiment No.2 involved six participants and evaluated the consistency of image orientation and distance from target lesions.

A typical setup consisted of the arrangement illustrated in the image shown below.

Experiment 1 Results

Compared to the control, the prototype effectively removes shadows in standard lighting, reduces focal flare in bright lighting, and provides illumination in dark conditions. The expert assessment noted “excellent lighting consistency and impressive results, definitely better than the phone.” However, the expert also mentioned a colour shift, suggesting that "a yellow light might be better since the purple hue could misrepresent lesion colours". An example of the device under these conditions is in.

Experiment 2 Results

Images were graded on orientation, distance, and angle, each rated out of 5 by a GP, for a total score out of 15 (see Appendix Fii). Table 8 shows these scores and images, indicating that DermaTrack matches or exceeds typical smartphone consistency.

Smartphone users struggled with distance accuracy, resulting in lower scores compared to DermaTrack (x̄ = 3.6/5 vs. 5.0/5). Users also had inconsistent orientation without guidance; for instance, Participant 2 rotated his device 180° between iterations, and Participants 1 and 5 had orientation variations over 20°.

With orientation instructions, tasks became clearer and less ambiguous. DermaTrack's sensitivity enforced the pre-set working distance, leading to consistently high distance scores (x̄ = 5.0/5).

Algorithm Testing

The performance of each of the models was examined using normalised confusion matrices (Appendix Hi), which show the frequency of misclassifications and the specific classes they were misclassified as. Additionally, training and validation loss and accuracy graphs were plotted to detect over/underfitting.
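For readers unfamiliar with the term, a normalised confusion matrix divides each row of raw counts by the number of true examples in that class, so each entry reads as a proportion. The sketch below is a minimal pure-Python illustration of that calculation; the function name and the toy labels are assumptions made for the example and are not results from the project.

```python
from collections import Counter


def normalised_confusion_matrix(y_true, y_pred, labels):
    """Row-normalised confusion matrix: entry [i][j] is the share of true
    class labels[i] that the model predicted as labels[j]."""
    counts = Counter(zip(y_true, y_pred))
    matrix = []
    for actual in labels:
        row_total = sum(counts[(actual, predicted)] for predicted in labels)
        row = [
            counts[(actual, predicted)] / row_total if row_total else 0.0
            for predicted in labels
        ]
        matrix.append(row)
    return matrix


# Toy labels for illustration only; these are not results from the project.
labels = ["benign", "malignant"]
y_true = ["benign", "benign", "benign", "benign", "malignant", "malignant"]
y_pred = ["benign", "benign", "benign", "malignant", "malignant", "benign"]

matrix = normalised_confusion_matrix(y_true, y_pred, labels)
for label, row in zip(labels, matrix):
    print(label, [round(value, 2) for value in row])
```

Normalising by row makes classes of very different sizes directly comparable, which matters for lesion datasets where benign examples typically far outnumber malignant ones.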

Algorithm Integration into an App

As a final step, once the performance was evaluated, an external input mechanism was set up to validate the models via a website, mimicking app inputs. Leveraging the most successful folds, two input models were created. This application demonstrated the ability to integrate the pipelines into a platform like the DermaTrack app, outputting relevant metrics such as diagnosis and probability.
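One common way to turn a classifier's raw output scores into a diagnosis and probability is to apply a softmax and report the highest-scoring class. The sketch below assumes this approach, which may differ from the project's implementation. The class codes are the seven HAM10000 diagnostic categories, while their order, the function names, and the example scores are hypothetical.

```python
import math

# The seven HAM10000 diagnostic categories, in an assumed output order.
CLASSES = ["akiec", "bcc", "bkl", "df", "mel", "nv", "vasc"]


def softmax(logits):
    """Convert raw model scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def to_report(logits, classes=CLASSES):
    """Return the most likely class and its probability."""
    probabilities = softmax(logits)
    best = max(range(len(classes)), key=probabilities.__getitem__)
    return {"diagnosis": classes[best], "probability": probabilities[best]}


# Made-up scores standing in for a model's output on one image.
report = to_report([0.1, 0.3, 0.2, -1.0, 2.5, 1.0, -0.5])
print(report["diagnosis"], f"{report['probability']:.1%}")
```

Returning a small dictionary keeps the output easy to serialise, so a website or app front end could display the diagnosis and probability without further processing.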

Awards

Winner of the IET (Institution of Engineering and Technology) Prize, an award established in the 2019-20 academic year and given to a student who produces an outstanding engineering technology output in their final project.